Learning to Learn Has Always Meant Something Different After Every Revolution

And what it means for workforce development

Fourth week of “Learning That Feels Good,” and I want to talk about something I’ve been thinking about a lot lately.

Every L&D professional I talk to is asking the same question: “How do we help people work effectively with AI?” But I think we’re asking the wrong question.

The right question is: “How do we help people learn when AI can instantly know anything?”

This isn’t about students doing homework with ChatGPT. It’s about your workforce: whether they’re developing the capacity to learn new skills independently, or becoming dependent on AI to do their thinking for them.

Because here’s what I think we need to talk about more directly: AI isn’t only changing what competencies organizations need. It’s changing what “learning to learn” fundamentally means for working professionals.

We’ve Been Here Before (Sort Of)

AI is what economists call a “general purpose technology,” like electricity, the printing press, or the steam engine. Technologies so transformative that they don’t just improve what we do; they restructure how we think about doing it.

And every time one of these technologies arrives, it changes what “learning” means.

The printing press (1440s): Before Gutenberg, learning meant memorization. Monks spent lifetimes committing texts to memory because books were rare and precious. After printing? Suddenly memorization mattered less. Learning shifted to comprehension - not whether you could recite Aristotle, but whether you understood him.

Tom Wheeler at Brookings captures this perfectly: Gutenberg “released ideas and information from captivity.” Knowledge moved from being scarce to being accessible. The skill of learning changed accordingly.

Electricity (1880s-1920s): Learning didn’t just move from candlelight to electric light. The factory system that electricity enabled transformed education itself. We invented the modern school system - age-based grades, standardized curricula, bells ringing every 50 minutes - because we needed to prepare workers for factory jobs. Learning became synonymous with compliance and following instructions.

The Internet (1990s-2000s): Google arrived, and suddenly “knowing facts” became less valuable than “knowing how to find facts.” Learning shifted again - from retention to retrieval. Why memorize the capital of Peru when you can look it up in 3 seconds?

Every general purpose technology redefines the core skill of learning. Memorization → Comprehension → Following instructions → Information retrieval.

So what does AI redefine learning as?

And more importantly for L&D: What does it mean to “learn to learn” when the next skill your workforce needs might not even exist yet, but AI will be able to perform it the moment it does?

Why AI Is Different

Here’s where it gets uncomfortable.

Phil Rosen offers a provocative framing that I can’t stop thinking about: “ChatGPT does the opposite of the printing press.”

The printing press enabled a profusion of knowledge. More books, more ideas, more access. It expanded what people could learn.

AI does something qualitatively different. It doesn’t expand knowledge - it distills it. It takes massive amounts of information and compresses them into bite-sized, confident-sounding answers.

Rosen’s warning: “Human understanding is under no obligation to increase alongside these developments.”

Read that again.

We can look smarter without being smarter. We can produce better work without learning anything. We can get the right answer without understanding why it’s right.

And that’s the crisis hiding in plain sight.

What The Research Is Actually Showing

I spend a lot of time reading learning science research. And there’s a pattern emerging across contexts, from corporate training to classrooms, that should concern every L&D leader.

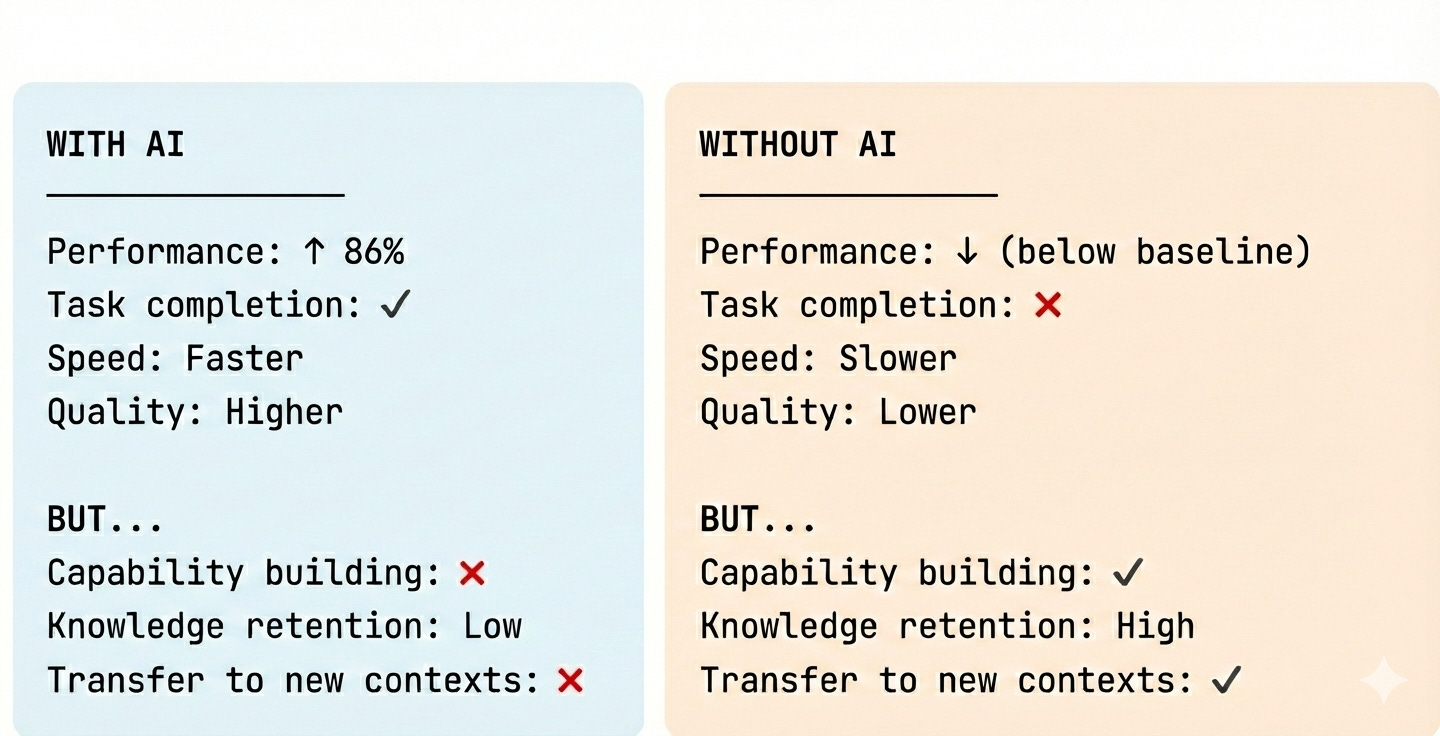

BCG Henderson Institute, 2024 (consultants using AI for work tasks): Knowledge workers using GenAI achieved 86% of data scientists’ benchmark performance on coding tasks versus 29% without AI. Impressive productivity gain, right?

But here’s what the researchers found: “Doing with AI” does not equal “learning to do.” Participants who used GenAI to complete tasks didn’t retain the underlying knowledge. They could perform while using AI. They couldn’t perform without it.

The research team’s conclusion: “This suggests that the AI may have been used as a crutch rather than a tool for learning.”

This doesn’t mean AI can’t enhance learning. A Harvard Physics study (2025) found students using a carefully designed AI tutor learned more than twice as much as those in active learning classrooms, because the AI was designed to promote thinking, not replace it. The difference? Intentional learning design.

This pattern isn’t unique to corporate settings. A rigorous study from Wharton/Penn (2024, peer-reviewed, published in PNAS) found the same dynamic with ~1,000 students using ChatGPT for math practice.

Students using ChatGPT improved practice performance by 48%. Then they took the test without AI and scored 17% worse than students who never used ChatGPT.

The researchers watched how students used it. They didn’t use AI to think better. They used it as a crutch. They copied solutions. They skipped the struggle. They optimized for task completion, not understanding.

They got better at doing with AI. They got worse at learning.

A preliminary MIT Media Lab study (2025, not yet peer-reviewed, 54 participants) found a parallel pattern in brain activity: ChatGPT users showed 34–48% less neural connectivity than those working independently. Over 80% couldn’t recall key content from their own work.

The study is small and limited to essay writing, but combined with the BCG and Wharton findings, a consistent pattern emerges across contexts.

Here’s what this means for L&D:

Performance with AI ≠ Capability without AI

When your employees complete AI-assisted training modules with 95% scores, what have they actually learned? When they use AI to draft reports, create analyses, or solve problems, are they building expertise or outsourcing thinking?

Most importantly: Are they learning how to learn new skills independently, or are they learning how to use AI?

Those are not the same thing.

What “Learning to Learn” Actually Means Now

So if AI changes what learning means, what’s the new skill?

The research keeps pointing to the same answer, though everyone phrases it differently:

Ethan Mollick: “The path to expertise requires a grounding in facts. Basic knowledge enables you to see patterns, catch errors, and evaluate AI output.”

Sal Khan: “The AI should be Socratic by design - it refuses to give direct answers. It asks: ‘What do you think is the next step?’”

James C. Kaufman (UConn, 2026): “AI is much better at generating ideas than evaluating them. If AI produces work at a B+ level, someone already working at an A level can use it selectively. But someone below that? AI becomes their ceiling.”

Here’s my synthesis:

Learning to learn in the age of AI means:

Building foundational knowledge first - because you can’t evaluate what AI says if you don’t know enough to spot errors

Developing judgment - the ability to recognize when AI helps and when it hinders genuine understanding

Preserving productive struggle - because the neural pathways that support thinking only form when you actually think

Or, as Robert Pondiscio puts it: “Students who rely on AI to write an essay may submit excellent work, but they have not done excellent thinking.”

And here’s Daniel Willingham’s cognitive science principle that every L&D professional should memorize:

“Memory is the residue of thought. If AI eliminates the need to think, it also eliminates the opportunity to remember.”

The Framework L&D Can Actually Use

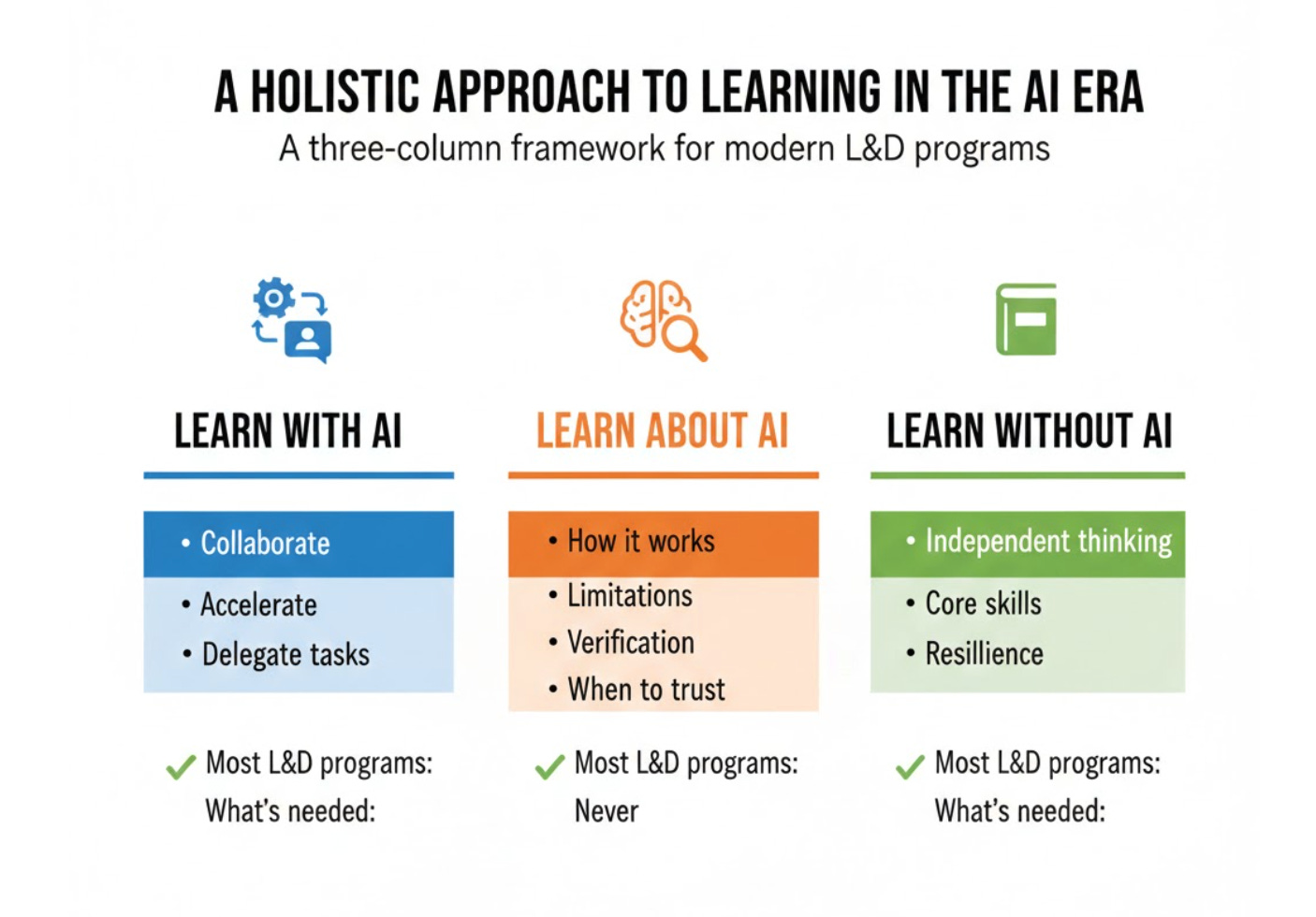

The most practical framework I’ve found for L&D comes from a 2025 article in Inside Higher Ed, and it translates perfectly to corporate training. Three words:

WITH / ABOUT / WITHOUT

Learn WITH AI: Design experiences where employees collaborate with AI as a thinking partner. What tasks does AI genuinely accelerate? When should you delegate work to AI?

Learn ABOUT AI: Build understanding of how AI actually works. What are its limitations? Where does it hallucinate? How do you verify its outputs? What does “85% confidence” actually mean in practice?

Learn WITHOUT AI: Protect spaces for independent thinking. Because if employees always use AI for a skill, they never build the thinking capacity that lets them function when AI isn’t available, or when it’s wrong.

Most L&D programs right now are doing only the first one. They’re teaching people to use AI for their jobs. But they’re skipping the “about” and ignoring the “without.”

And that’s how you get the BCG result: employees who perform great with AI but can’t function without it.

Worse: employees who don’t know they can’t function without it, because their performance metrics look fine.
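One way to make that hidden gap visible is to assess the same skill twice, once with AI and once without, and compare the two scores. Here is a minimal sketch of that idea in Python. Everything in it is an assumption for illustration: the field names, the 0-100 scale, the sample employees, and especially the 20-point threshold, which no study cited here prescribes.

```python
from dataclasses import dataclass


@dataclass
class AssessmentResult:
    """Scores (0-100) for one employee on one skill. All fields are hypothetical."""
    employee: str
    score_with_ai: float
    score_without_ai: float


def dependency_gap(result: AssessmentResult) -> float:
    """Points lost when the AI is taken away (positive means worse without AI)."""
    return result.score_with_ai - result.score_without_ai


def flag_dependency(results, threshold=20.0):
    """Return employees whose gap meets or exceeds the (assumed) threshold."""
    return [r.employee for r in results if dependency_gap(r) >= threshold]


# Two made-up employees with identical AI-assisted scores.
results = [
    AssessmentResult("A", score_with_ai=95, score_without_ai=90),
    AssessmentResult("B", score_with_ai=95, score_without_ai=55),
]

print(flag_dependency(results))  # ['B']
```

Both employees look identical on the AI-assisted metric. Only the unassisted score shows that one of them is leaning on the tool, which is why the second measurement has to be designed in rather than assumed.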

What The Adoption Data Shows

Here’s where we are right now:

80% of L&D professionals view AI as important. But only 25% factor it routinely into learning strategies. (Training Industry, 2026)

Employees are adopting AI three times faster than their leaders. They’re using it for work tasks, for learning, for problem-solving. And L&D has no visibility into what they’re actually learning versus what they’re just offloading.

39% of core workforce skills will change within five years. (World Economic Forum, 2025) But if your employees are using AI to perform those skills rather than learn them, what happens when the skills change again?

What does this tell us?

We’re in the messy middle. People are using AI. But L&D hasn’t figured out how to integrate it in ways that actually build capability instead of dependency.

And the longer we optimize for performance metrics without protecting learning capacity, the bigger the capability gap we’re building.

The One Thing I Want You to Take Away

If you’re designing learning experiences, whether you’re building training programs, evaluating vendors, or advising business leaders on L&D strategy, here’s the shift you need to make:

The question shifts from: “How can AI make this faster/easier/more engaging?”

To: “Where in this learning experience does AI genuinely support capability building, and where might it replace it?”

Because here’s what the research is showing us:

Performance improves. Capability building degrades. These are not the same thing.

AI can make employees look more competent without making them be more competent.

The organizations that win will be the ones that understand how to integrate AI in ways that build capability rather than replace it, and that know which tasks AI should handle and which require human cognitive effort.

Learning to learn in the age of AI doesn’t mean learning to use AI.

It means developing the metacognitive capacity to know what you know, what you don’t know, and what kind of help you need.

It means building judgment about when AI accelerates genuine learning and when it’s just offloading thinking.

It means designing L&D experiences where AI handles the busywork so employees can focus their cognitive effort on the challenges that actually build expertise. The goal is not to have people struggle with tasks AI should handle, but to have them engage deeply with the thinking AI can’t do.

Until next week: Look at one learning experience your team is designing, or one vendor solution you’re evaluating, and ask yourself: does this build employees who can think critically with or without AI, or does it create reliance on AI for thinking they should do themselves?

The answer might surprise you.

If this resonated, please share it with an L&D colleague who’s navigating AI in their programs. And if you want to talk through how these principles apply to your specific L&D challenges, reply to this email. I read every one.